Boost Your Rendering Performance with content-visibility

Developers don’t typically prioritize rendering performance when they aim to improve and optimize their websites.

After all, there are other optimizations such as improving server response times, reducing file sizes, and prioritizing the file loads that provide immediate visible improvement. Also, rendering is an internal process of browsers that web developers do not have direct access to.

However, developers should focus on rendering for three primary reasons:

- Rendering is an integral part of how modern websites work. It is a blocking operation, therefore it blocks all user interactions

- The results of rendering performance are incredibly apparent in mobile devices, especially the lower end ones

- There are direct and indirect ways to help browsers render our content more efficiently

Influencing browser rendering processes is now even easier to do, thanks to the new CSS content-visibility property. Essentially, this property changes the visibility of an element and manages its render state.

It is somewhat similar to display and visibility properties that already exist. However, content-visibility operates differently than these do.

In this blog post, we'll learn about content-visibility, how it helps with rendering performance, and. how it compares to display and visibility properties.

How content-visibility improves rendering performance

The key capability of content-visibility is that it allows us to postpone the rendering of the HTML elements of our choice. By default, browsers render all the elements within the DOM tree that can be viewed by the user.

Users can see the elements that fit into their viewport and view the other elements within the page by scrolling. Rendering all the elements at once allows the browser to calculate the dimensions of a page correctly while keeping the page layout and the scrollbar consistent throughout the page.

If the browser didn't render some of the elements within the page, scrolling would be a nightmare because it wouldn’t be possible to calculate the page height correctly. Or would it?

Fear not. content-visibility has an auto option that detects whether an element is within the viewport of the user and skips the rendering for the elements that haven’t yet enter the viewport yet.

This makes sense because the user will not initially see the elements that stay outside of their screen, meaning that these elements are unnecessary during the initial page load. So, postponing rendering these elements reduces the initial rendering time so a user can see the content faster.

Measuring the power of content-visibility

Sure, it all sounds good in theory, but let’s dig deeper. So, to leverage the power of content-visibility and measure the benefits as accurately as possible, I've put together a demo blog.

A blog is a good test case for this because it has text, images, and various other HTML elements. Blog pages tend to be long, so there is often some content below the fold that can benefit from delayed rendering. And the content is usually static, so we don’t need to account for any dynamic content being loaded that can affect our metrics. We can simply focus on the initial page load.

With these ideas in mind, I've modified the blog to create two different versions of it with the same content, except for one important difference: one of them has content-visibility: auto turned on.

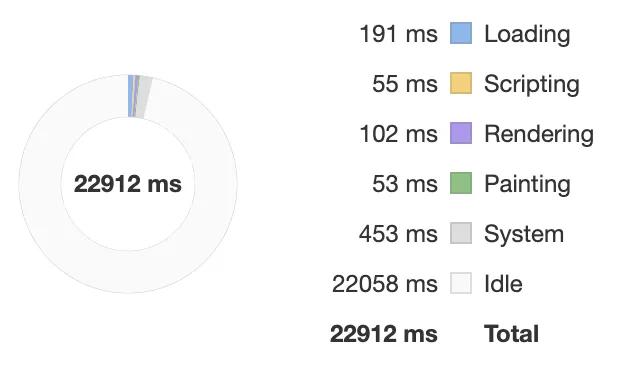

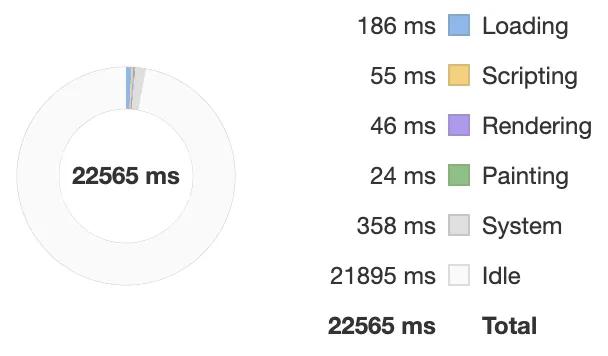

I also decided to run the benchmarks on Chrome's low-end mobile phone emulation. There is simply too much processing power on a modern high-end laptop to see the difference between the two versions clearly. I ran multiple benchmarks with similar results. Below, you can see an example benchmark for each version of the blog.

The benchmarks show that the rendering takes about 50MS shorter when content-visibility is used. It is quite an improvement that mobile users would appreciate.

Both versions of the blog are available online so you can run the benchmarks yourself as well:

How to implement content-visibility

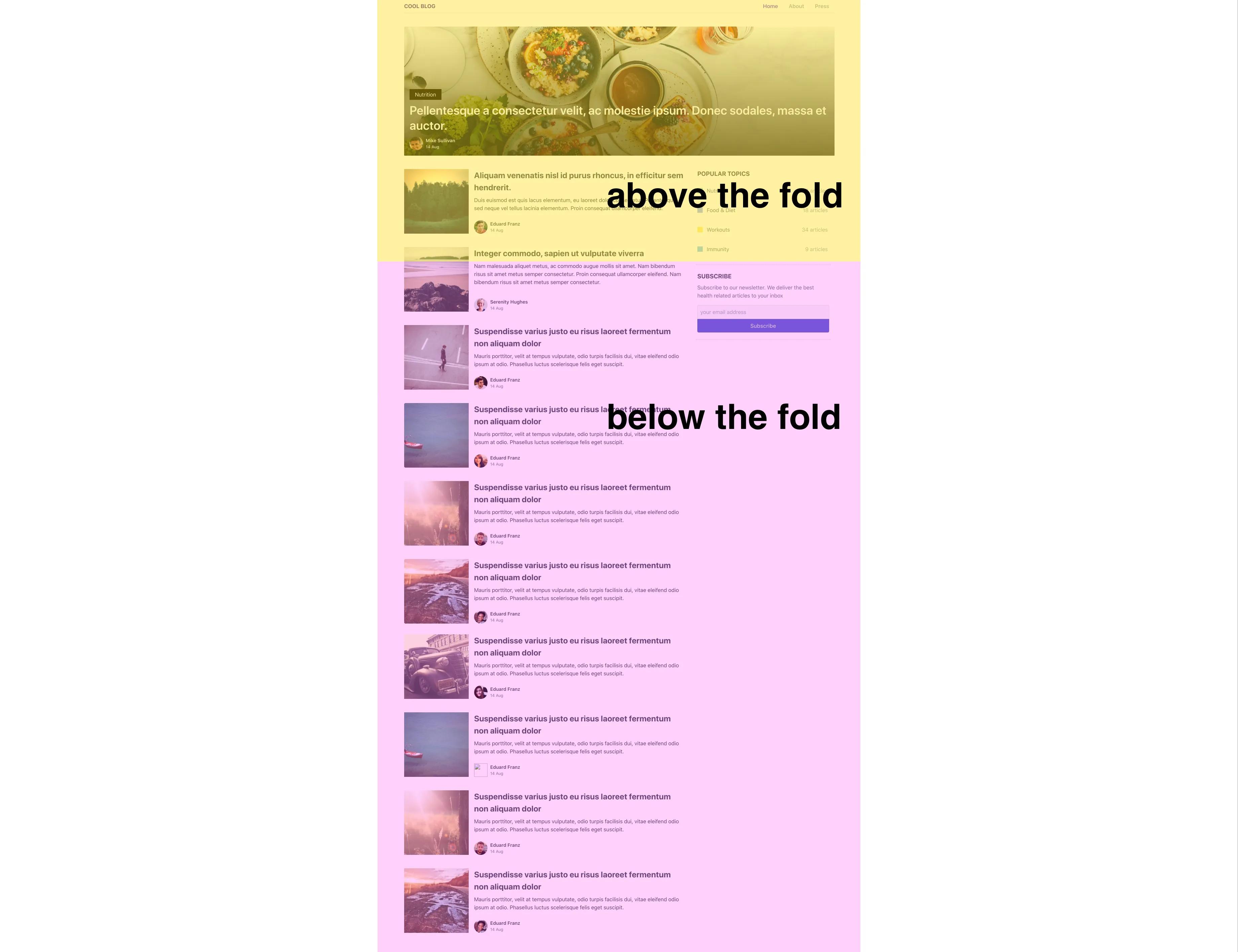

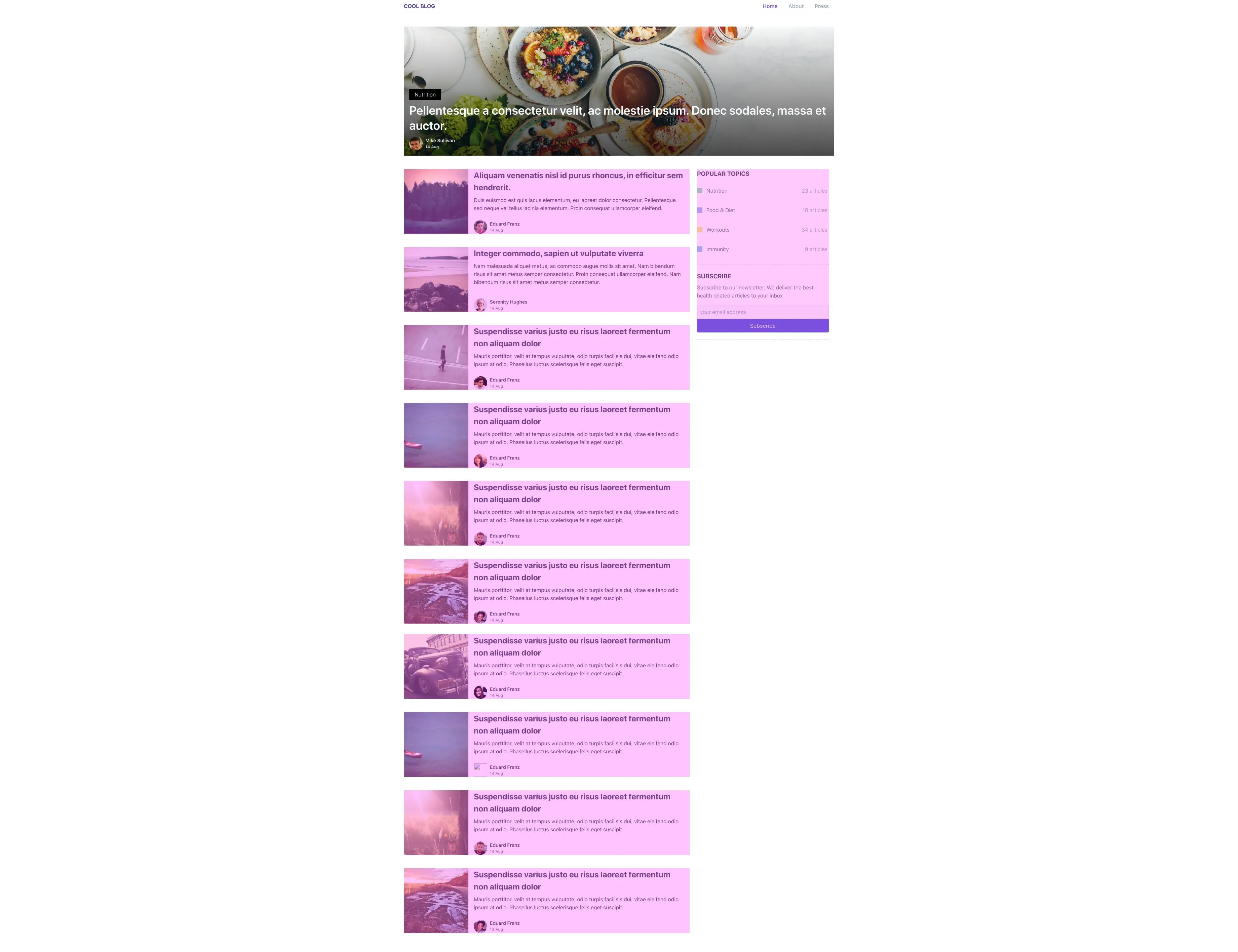

Reaping the benefits of content-visibility isn't hard. We first start by identifying the parts of the page we want to use content-visibility on. On the screenshot below, you’ll see that I've identified the content that is visible to the user immediately (i.e., above-the-fold content) and the content that is reachable by scrolling. Postponing the rendering of the below-the-fold content would reduce our initial rendering time.

Setting content-visibility: auto for the below-the-fold content would trigger the render optimization functionality where the browser postpones the rendering for that content until it is visible. Here’s the code to do so:

.below-the-fold {

content-visibility: auto;

}

This achieves the rendering behavior we want but has one small issue. Remember how I mentioned that rendering all the content at the beginning was needed to ensure that the page height was calculated correctly and to keep scrolling consistent? Now we have this issue here.

By default, content-visibility will treat the height of the element it's assigned to as 0. The browser will make this element invisible by making its height 0 until it is rendered, which messes with our page height and scrolling.

But this behavior is overridden if there is already a height assigned to the element or its children elements, so this isn’t an issue if your below-the-fold elements already have height properties set.

If you don’t have height properties in your elements and do not want to add them because of possible side effects, you can use the contain-intrinsic-size property to make sure the elements are rendered correctly while also keeping the benefits of delayed rendering. With this, we end up with a code like below:

.below-the-fold {

content-visibility: auto;

contain-intrinsic-size: 240px;

}

The value 240px you see there is just an example. That should be replaced with the actual height of the element we'll use content-visibility on.

Unfortunately, calculating a single value for the whole below-the-fold content is difficult. The page can be long or short based on the items shown on that page. So, we'll add this property to the elements that have a predictable height. For example, the articles on our example blog have a pretty standard look.

Each article in the blog has 468px height. Now we can complete our example case by setting contain-intrinsic-size to 468px.

.below-the-fold {

content-visibility: auto;

contain-intrinsic-size: 468px;

}

Advanced usage of content-visibility

The auto option certainly does wonders, but it’s possible to take things a step further. content-visibility provides us with two more potential values called hidden and visible. These values do what you would expect them to do and hide or show the element that content-visibility is assigned to. These values can be useful for advanced use cases.

One such case would be showing/hiding elements programmatically, similar to how the display property is used. In this case, content-visibility can improve the rendering performance for items that are shown or hidden frequently, such as modals or pop-ups. content-visibility can provide this performance boost thanks to how its hidden value functions differently than others.

How content-visibility: hidden compares to alternatives

display: none: This completely hides the element and destroys its rendering state. When we want to show the element again, the browser has to re-render it, which is expensive.

visibility: hidden: This simply makes the element invisible. The browser can re-render it when it deems necessary, even if the element is hidden. The element and its children also keep a visibly empty space in the page.

content-visibility: hidden: This hides the element but keeps its rendering state. This means the element behaves as it does on display: none, but the cost of showing it again is much lower.

Of course, this doesn't mean we don’t need display or visibility anymore. They still have their use cases, but now, we have an additional tool to leverage.

Browser support for content-visibility

Because content-visibility is still in the working draft stage, its support will come a bit slower than others. Currently, only Chrome and Edge version 85 support it. This means it will take a bit longer for us to get the full benefits of implementing this optimization.

On the flip side, the auto functionality of this CSS property is purely for performance optimization. It doesn't cause any visual change. When it is not supported by a browser, it will simply be ignored without causing any negative impact, so it’s safe to implement for all browsers and observe the behavior as it gets supported by more browsers.

You can check the current browser support here.

Conclusion

content-visibility promises solid gains for very little work. The auto functionality is something we can implement today and start reaping benefits from. More advanced usage of it as a replacement for display or visibility, however, has to wait until content-visibility becomes supported by most modern browsers.

Give content-visibility: auto a try and let us know how it performs for you!

Use AI to Query Your Databases in Plain English — A Practical Guide with OpenClaw

Use AI to Query Your Databases in Plain English — A Practical Guide with OpenClaw

Last week I asked my AI assistant to count the records in my production database. It made a backup first, opened a read-only connection, ran the query, and gave me the answer. The whole thing took about 30 seconds.

That sentence sounds like a sales pitch. It is not. It is the exact thing I did while debugging a bug in a small Next.js app I run on Coolify. I did not open a SQL client. I did not SSH in and poke around. I asked a local AI agent, and it gave me an answer, along with a safe, auditable trail of what it did.

This post is a practical guide. I show what worked for me, why it works, and how to do the same without making your database less secure. If you manage your own databases and want the convenience of plain English queries without the friction of switching contexts, this will be useful.

What is OpenClaw?

OpenClaw is an open source AI agent platform that runs where you run your stuff. It is not a hosted SaaS. It runs on your machine or server, and it can be given access to your filesystem, shell, and the tools installed there. That means it can run sqlite3, psql, or mysql, read files, copy things, and follow rules you give it.

If you want to explore the project, check the repo at https://github.com/openclaw/openclaw and the docs at https://docs.openclaw.ai. The important bit is this. Because OpenClaw runs in your environment, you control the permissions. That is what makes using an agent to query a database practical, safe, and auditable.

Why this works, and why it is not as crazy as it sounds

A few things line up to make this useful.

- Large language models are remarkably good at translating plain English into SQL for common queries. For counts, group bys, top-k queries, and simple joins, the translations are reliable.

- OpenClaw can run shell commands. That means the agent can execute

sqlite3,psql, ormysqlclients directly. It does not need magical database credentials embedded in the model. It uses the same tools you use. - You control the environment. You decide which database the agent can reach, what user it uses, and whether the connection is read-only.

- This is about saving time, not replacing your DB admin. If you need a production migration, complex performance tuning, or schema redesign, those are still human jobs. For quick lookups, debugging, and small ad hoc analysis, an agent is a force multiplier.

Database support

OpenClaw is not tied to one database engine. If you can reach your database from a terminal, the agent can talk to it.

- SQLite. The simplest case, a local file. You can open it read-only using URI flags. See https://www.sqlite.org/uri.html for details about

?mode=ro. - PostgreSQL. You can run

psqldirectly, or use Python withpsycopg2if you want richer control. - MySQL and MariaDB. Use the

mysqlclient. Connection strings, env variables, or mounted credential files work. - Remote databases. SSH tunnels, Tailscale, or a VPN let the agent reach databases behind a firewall.

- Cloud-managed databases. RDS, Cloud SQL, PlanetScale, Supabase, and similar services work as long as the agent can connect.

In short, if you can connect from the shell on the machine OpenClaw runs on, OpenClaw can query it.

The real example: my production SQLite database

This is the story I mentioned at the top. I run a small Next.js app deployed with Coolify. The app stores designs in a SQLite database located at a path like /data/coolify/applications/awesome-app/designs.db.

I needed a quick count of the designs table. Instead of SSHing in and running sqlite3 by hand, I asked OpenClaw. This is roughly how the interaction went. I am paraphrasing and simplifying for clarity.

User says: "List the tables in /data/coolify/applications/awesome-app/designs.db"

Agent does: "I will not modify your file without confirmation. Do you want me to proceed?"

User says: "Yes, please proceed."

Agent does: "Making a timestamped backup at workspace/backups/designs.db.2026-02-12T14-03-21.sql"

Agent does: "Opening the database in read-only mode and listing tables."

Agent returns: "tables: designs, users, migrations"

User says: "Count the records in the designs table"

Agent does: "Confirmed. I will open the database read-only and run a count query."

Agent does: "Running: SELECT COUNT(*) FROM designs;"

Agent returns: "453 records in the designs table"

Agent saves results to: workspace/results/designs_count_2026-02-12.json

[IMAGE: Screenshot of a terminal or chat showing the natural language query and the agent's response with the SQL result]

A few details worth calling out.

- The agent asked for explicit confirmation before touching a production file. Do not skip this step in your setup.

- The agent made a timestamped backup. I prefer backups to be automatic for anything touching production, even read-only operations.

- It opened the database in read-only mode. For SQLite that is done with the URI flag documented at https://www.sqlite.org/uri.html. That adds an extra safety layer.

- It saved the results to a JSON file in my workspace. I can inspect, version, or share that file without re-hitting the database.

That interaction saved me five minutes of SSH and copy-paste. It also left an auditable trail. The agent recorded what it ran and why. That trace matters when you are working with important data.

Security and impact radius, the important part

This is the serious section. Giving an agent access to a database is powerful, and power needs guards. I group the safeguards into authentication, read-only enforcement, recommending replicas, network isolation, and backups.

Authentication

If OpenClaw runs on the same machine as the database, it often already has file-level access. That was the case for my SQLite example. For remote databases you have choices. Use SSH tunnels, environment variables for credentials, or mounted key files. Never hardcode passwords inside agent prompts or configuration files.

Read the agent configuration docs at https://docs.openclaw.ai for recommended patterns. Keep secrets out of chat history and use environment variables or secret stores where possible.

Read-only enforcement

Defense in depth matters. I use three layers.

- DB level. Create a dedicated read-only database user. For Postgres this means a user with SELECT only privileges. See https://www.postgresql.org/docs/current/sql-grant.html. For MySQL see https://dev.mysql.com/doc/refman/8.0/en/grant.html.

- Connection level. Use SQLite’s

?mode=roURI parameter, see https://www.sqlite.org/uri.html. For Postgres you can start a session withSET TRANSACTION READ ONLYto make accidental writes fail. - Agent level. Tell OpenClaw to never run data mutation statements. This is a soft guardrail, but it helps. Combine it with the DB-level controls for real safety.

Point to a replica

If you have a read replica, point the agent there. That eliminates any risk of write-side performance impacts or accidental changes.

Cloud providers make this easy. Most managed systems offer a read-only endpoint or a low-cost replica. Use it. You get zero risk to production writes and the same data for reporting.

Network isolation

Keep your database behind firewalls and security groups. If OpenClaw runs on a different machine, use Tailscale or an SSH bastion to connect securely. Do not expose your DB port to the public internet because you want easy AI queries.

Tailscale is a tiny, practical solution for secure, private networking. See https://tailscale.com.

Backup before query

Even read-only operations can go wrong. Maybe a script accidentally runs a mutation. Maybe you misconfigured a path. In my case, the agent made a timestamped copy of the SQLite file before opening it. That is a small step with huge value.

[IMAGE: Diagram showing the security layers: User -> OpenClaw Agent -> Read-Only User -> Read Replica -> Database]

A PostgreSQL example

Here is a realistic example for a Postgres database hosted on Supabase or RDS. I will show the high level steps and the plain English flow you might use.

Setup steps I ran locally for this example:

- Create a read-only user on the Postgres instance.

CREATE ROLE ai_readonly LOGIN PASSWORD 'strongpassword';

GRANT CONNECT ON DATABASE mydb TO ai_readonly;

GRANT USAGE ON SCHEMA public TO ai_readonly;

GRANT SELECT ON ALL TABLES IN SCHEMA public TO ai_readonly;

ALTER DEFAULT PRIVILEGES IN SCHEMA public GRANT SELECT ON TABLES TO ai_readonly;

See https://www.postgresql.org/docs/current/sql-grant.html for more details on grants.

- Make the credentials available to OpenClaw securely, for example as environment variables:

export DB_URL="postgres://ai_readonly:[email protected]:5432/mydb"

-

If the database is private, create an SSH tunnel, or use Tailscale.

-

Ask OpenClaw a question in plain English.

User: "Show me the top 10 users by order count this month"

Agent: "I will run a read-only query against the orders and users tables. Do you want me to proceed?"

User: "Yes"

Agent does: "Running:

SELECT u.id, u.email, COUNT(o.id) AS orders

FROM users u

JOIN orders o ON o.user_id = u.id

WHERE o.created_at >= date_trunc('month', current_date)

GROUP BY u.id, u.email

ORDER BY orders DESC

LIMIT 10;"

Agent returns: (tabular results)

The agent used the connection string provided. It translated the English prompt to SQL, executed it via psql, and returned results. It also saved the raw CSV to the workspace for later inspection.

Saved CSV to workspace/results/top_users_2026-02-12.csv

[IMAGE: Screenshot showing a small table of results from psql in the agent chat]

Advanced patterns

Once you are comfortable with the basics, you can layer on more patterns.

- Schema introspection. Before you ask data questions, ask the agent to list tables and columns. That reduces hallucinations about column names.

- Complex queries. Natural language can express joins, filters, aggregations, and window functions. The agent produces the SQL and runs it. Always do a quick sanity check of the SQL when the question is ambiguous.

- Exports. Ask the agent to export results to CSV or JSON for sharing or analysis elsewhere.

- Scheduling. Use OpenClaw’s scheduling features to run recurring queries. For example, you can ask for a weekly signup count and have the agent email or save the result every Monday at 9am.

All of these are just shell and file operations under the hood. The agent runs a SQL client, writes files, and can call mail, curl, or your preferred notification tool.

Honest limitations

I use this a lot, but I also know where it fails.

- Ambiguous prompts. If you ask "Show me active users" without defining active, the agent has to guess. Define your metrics.

- Large result sets. The agent should add sensible LIMITs. If it does not, add them yourself. Downloading millions of rows into a small VM is not fun.

- Hallucinated schema elements. Models sometimes invent table or column names. Always start with schema introspection: "List tables and columns." That eliminates most mistakes.

- Not a replacement for BI tools. For dashboards, scheduled alerts, and complex visualizations, use tools built for that. This is for quick lookups, debugging, and ad hoc analysis.

- Audit and compliance. An agent can simplify tasks, but some compliance regimes demand stricter access controls and logging. Treat the agent like any other tool that touches data.

Trust but verify. Especially on production.

Getting started checklist

- Install OpenClaw. Follow the docs at https://docs.openclaw.ai.

- Make sure your DB is reachable from the machine OpenClaw runs on, either directly, over an SSH tunnel, or via Tailscale.

- Create a read-only DB user for the agent, if possible. See Postgres grants https://www.postgresql.org/docs/current/sql-grant.html and MySQL grants https://dev.mysql.com/doc/refman/8.0/en/grant.html.

- Start with schema introspection. Ask the agent to list tables and columns before you run queries.

- Start querying in plain English. Keep queries short and unambiguous. Save outputs to files so you have an audit trail.

Conclusion

I have been using OpenClaw as a natural language interface to my databases for a week now, and it is genuinely useful for quick lookups. It does not replace my SQL skills, but it removes a lot of friction. I can get answers fast, and I get an auditable record of what ran.

If you want to try it, the two links to keep handy are the repo at https://github.com/openclaw/openclaw and the docs at https://docs.openclaw.ai.

This approach is practical because it runs where your data runs. You keep control, and with a little care, you avoid the usual security pitfalls. Try it on a read replica or a local copy first. The convenience is addictive, but good safety habits keep it useful.

[IMAGE: Example terminal showing a user asking "Count records in designs table" and the agent returning the count plus a saved JSON file path]

[IMAGE: Architecture diagram showing OpenClaw running in a bastion host, connecting via SSH tunnel to a Postgres read replica]

Sources and further reading:

- OpenClaw repository: https://github.com/openclaw/openclaw

- OpenClaw docs: https://docs.openclaw.ai

- SQLite URI documentation, for

?mode=ro: https://www.sqlite.org/uri.html - Postgres GRANT docs: https://www.postgresql.org/docs/current/sql-grant.html

- MySQL GRANT docs: https://dev.mysql.com/doc/refman/8.0/en/grant.html

- Tailscale for private networking: https://tailscale.com